How to Land an AI Engineering Job in 2026

Articles

cost_effective_ai_models

zaya1_8b_performance

edge_ai_applications

ai_math_accuracy

ai_inference

small_parameter_model

llm_efficiency

90percent_confidence_accuracy_gap

ai_confidence_misrepresentation

ai_model_calibration_issues

ai_confidence_scores_explained

softmax_calibration_gap

overconfident_ai_risks

ai_model_overconfidence

99percent_confidence_ai_models

knowledge_graph

rag_techniques

ai_inference

ai_agents_pdf_conversion

prompt_engineering_techniques

structured_data_extraction

llm_applications

pdf_data_automation

efficient_ai_workflows

ai_hallucination_prevention

llm_context_optimization

prompt_engineering_techniques

advanced_ai_frameworks

fine_tuning_llms_techniques

cursor_v0

ai_cost_reduction

rag

ai_token_management

reduce_ai_agent_errors

ai_agent_statefulness_techniques

improve_ai_agent_accuracy

fix_ai_agent_forgetfulness

ai_agent_forgetfulness_solutions

ai_agent_memory_solutions

3_layers_to_fix_ai_forgetting

healthcare_ai

ai_hallucinations

ai_inference_accuracy

llm_fine_tuning_techniques

agentic_rag

clinical_decision_making

prompt_engineering_techniques

rag_technology

prompt_engineering_techniques

agentic_rag

ai_agents

agentic_systems

llm_products

static_rag

ai_workflows

ai_inference_tools

rag_limitations

cross_source_synthesis

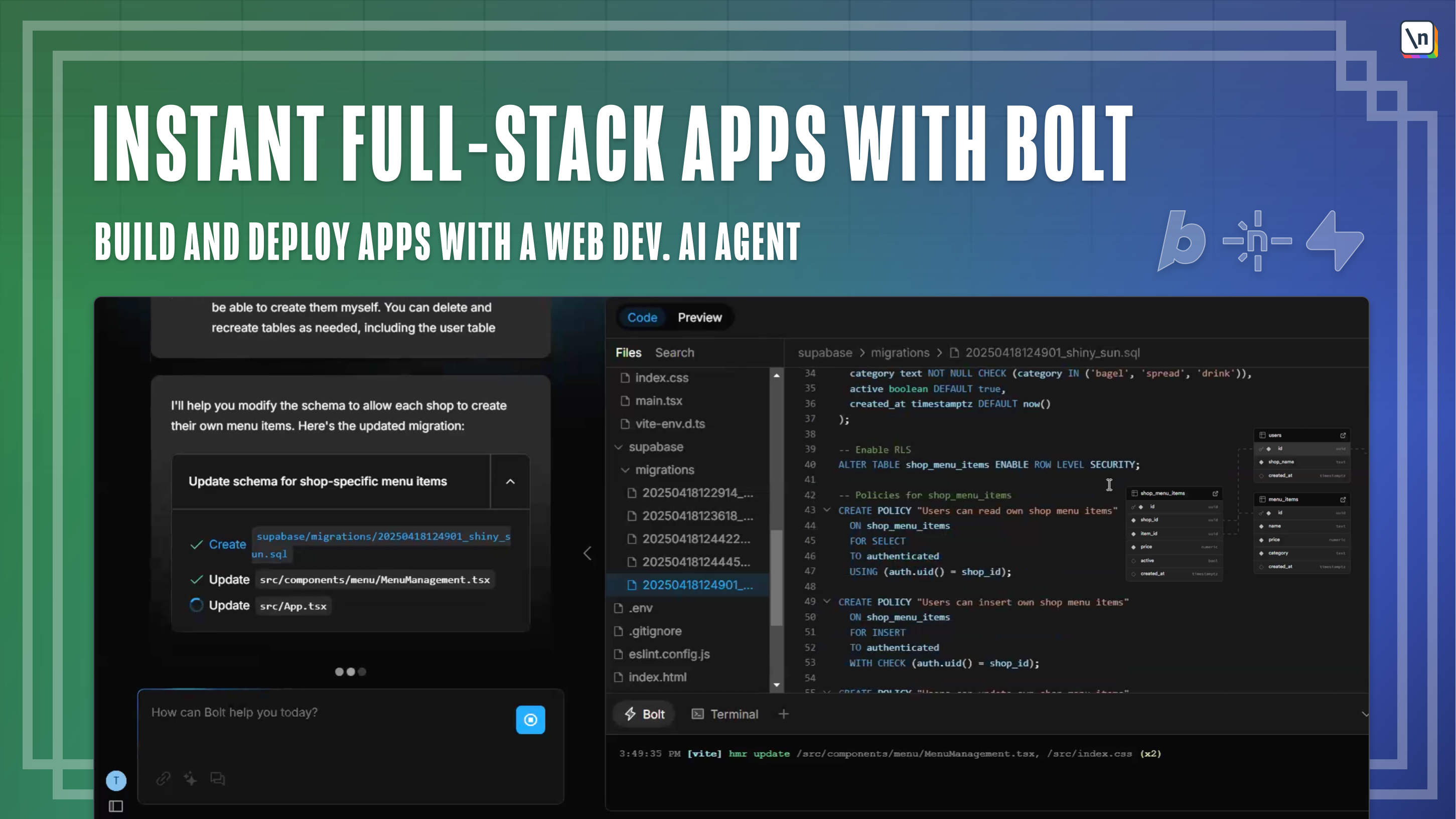

ai_coding_platform

role_based_access_control

claude_chat

code_review_ai

ai_chat_tools

ide_integration

ai_debugging

ai_accuracy

structured_data_provenance

knowledge_graphs

ai_hallucinations

vector_rag

enterprise_ai_compliance

graphrag

multi_hop_reasoning

vae_tutorial

probabilistic_models

vaes_explained

vae_applications

ai_drug_discovery

generative_ai_models

synthetic_data_generation

ai_inference_costs

ai_deployment_costs

reasoning_models_increase_inference_costs

llm_performance_optimization

llm_inference_expenses

ai_model_token_costs

reasoning_model_efficiency

prompt_engineering_techniques

next_gen_ai_trends

ai_accuracy_improvements

rag_frameworks

llm_fine_tuning_methods

ai_inference_tools

rag_framework_decline