How to Land an AI Engineering Job in 2026

Lessons

Career Prep
AI Accelerator

- Job Guaranteed Program
  - Complete all program requirements and pass internal evaluations to unlock full job placement support
  - We apply to roles on your behalf, send direct outreach to recruiters and hiring managers, and manage follow-ups
- Program Requirements
  - Complete all 8 approved mini projects on GitHub with full documentation and demo videos
  - Make at least one accepted open-source contribution
  - Finalize and get approval on your resume, LinkedIn, and portfolio
- Internal Interviews
  - Pass a technical AI engineering interview
  - Pass a coding and system design interview
  - Pass a leadership, communication, and hiring-manager interview
- Job Placement & Guarantee
  - Direct recruiter and hiring manager outreach with your projects, GitHub, and portfolio
  - Interview guarantee and job offer guarantee once all requirements are met
  - Every introduction positions you as a strong, credible AI engineer
- Career Coaching & Support
  - GitHub review, resume reviews, and career coaching sessions
  - Mock interview practice with AI-powered critique loops
  - AI engineering interview question bank
  - Personalized career path guidance across AI Engineer, Model Engineer, and Research Engineer roles

AI Accelerator

- Foundations & AI Applications
  - Pick a profitable AI niche and validate real demand using structured research
  - Learn AI product templates that connect user problems to proven UX flows and AI stacks
  - Build audience-first distribution strategies across social, content, communities, and funnels
- Statistics, Evaluations & Synthetic Data
  - Set up Python tooling, Jupyter notebooks, and virtual environments
  - Build core AI intuition with vectors, tensors, matrices, NumPy, and probability
  - Understand transformer LLM applications across text, code, images, audio, and video
  - Build your first LLM inference API and learn evaluation-based AI engineering
  - Generate synthetic QA datasets and apply LLM-as-Judge workflows for calibration
- Prompt Engineering, Embeddings & RAG
  - Master zero-shot, few-shot, chain-of-thought, and defensive prompt design
  - Learn tokenization, dense embeddings, and multimodal alignment with CLIP
  - Build RAG pipelines with chunking, vector databases, semantic retrieval, and reranking
  - Explore n-gram language models and connect them to neural network foundations
  - Survey all forms of fine-tuning: domain, instructional, LoRA, RLHF, DPO, and GRPO
- Transformers & Fine-Tuning
  - Implement self-attention, cross-attention, and modern inference optimizations
  - Fine-tune embeddings with triplet loss, contrastive tuning, and hard-negative mining
  - Rebuild GPT-2 (124M) from scratch in PyTorch with advanced training mechanics
  - Fine-tune multimodal encoders for classification, regression, and domain-specific tasks
- Pre-Training, Post-Training & Agents
  - Build and train full decoder-only transformer stacks with DDP and gradient accumulation
  - Design agent architectures with perception, planning, tool-use, and observation loops
  - Study Mixture-of-Experts routing, modern LLM architectures, and reasoning emergence
  - Implement advanced RAG with multi-hop reasoning, Graph RAG, and agentic retrieval
  - Apply DPO, RLHF, PPO, and GRPO for preference-based model alignment
  - Build AI code agents inspired by Copilot, Cursor, and Windsurf architectures
- Case Studies & Production AI
  - Build real-time text-to-voice systems with sub-second latency and streaming pipelines
  - Design production-grade AI with guardrails, structured outputs, and scaling patterns
  - Explore text-to-video generation with Diffusion Transformers, Sora, and Wan 2.2
  - Create browser agents with vision-to-code workflows and DOM-based reasoning
  - Learn how to stay current with AI trends, frameworks, and emerging techniques
- Hands-On Projects & Community
  - Complete 50+ code exercises and 4 competition-based mini projects
  - Build and demo a personal or professional AI project
  - Attend weekly live lectures, Q&A sessions, and group coaching calls
  - Join an in-person mastermind event in Miami for collaboration and networking

Orientation: Technical Kickoff
AI Accelerator

- Jupyter & Python Setup
  - Understanding why Python is used in AI (simplicity, libraries, end-to-end stack)
  - Exploring Jupyter Notebooks: shortcuts, code + text blocks, and cloud tools like Google Colab
- Hands-On with Arrays, Vectors, and Tensors
  - Creating and manipulating 2D and 3D NumPy arrays (reshaping, indexing, slicing)
  - Performing matrix operations: element-wise math and dot products
  - Visualizing vectors and tensors in 2D and 3D space using matplotlib
- Mathematical Foundations in Practice
  - Exponentiation and logarithms: visual intuition and matrix operations
  - Normalization techniques and why they matter in ML workflows
  - Activation functions: sigmoid and softmax with coding from scratch
- Statistics and Real Data Practice
  - Exploring core stats: mean, standard deviation, normal distributions
  - Working with real datasets (Titanic) using Pandas: filtering, grouping, feature engineering, visualization
  - Preprocessing tabular data for ML: encoding, scaling, train/test split
- Bonus Topics
  - Intro to probability, distributions, classification vs regression
  - Tensor intuition and compute providers (GPU, Colab, cloud vs local)
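To give a flavor of the "sigmoid and softmax from scratch" item, here is a minimal sketch using only the Python standard library. It is an illustration, not course material: the function names and the choice of plain lists over NumPy arrays are assumptions made for this example.

```python
import math


def sigmoid(x):
    """Logistic function 1 / (1 + exp(-x)), written to avoid overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, exp(x) is small, so this form cannot overflow.
    z = math.exp(x)
    return z / (1.0 + z)


def softmax(values):
    """Turn a list of scores into probabilities that sum to 1."""
    # Subtracting the maximum leaves the result unchanged but keeps exp() in range.
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


if __name__ == "__main__":
    print(sigmoid(0.0))
    print(softmax([1.0, 2.0, 3.0]))
```

Subtracting the maximum score before exponentiating is a common stability trick. Without it, large scores would overflow `math.exp`.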

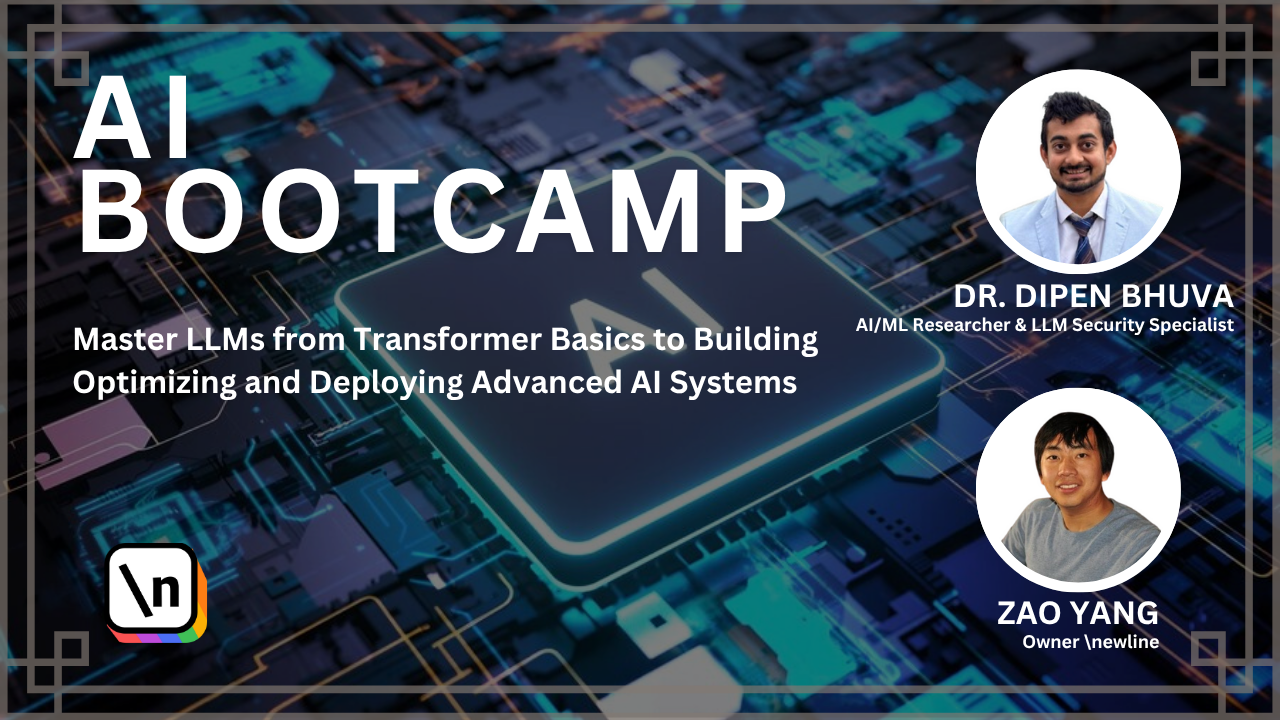

Orientation: Course Introduction
AI Accelerator

- Meet the instructors and understand the support ecosystem (Circle, Notion, async help)
- Learn the 4 learning pillars: concept clarity, muscle memory, project building, and peer community
- Understand course philosophy: minimize math, maximize intuition, focus on real-world relevance
- Set up accountability systems, learning tools, and productivity habits for long-term success

Staying Current with AI (Research, News, and Tools)
AI Accelerator

- Track foundational trends: RAG, Agents, Fine-tuning, RLHF, Infra
- Understand tradeoffs of long context windows vs retrieval pipelines
- Compare agent frameworks (CrewAI vs LangGraph vs Relevance AI)
- Learn from real 2025 GenAI use cases: productivity + emotion-first design
- Stay current via curated newsletters, YouTube breakdowns, and community tools

Career Prep: Roles, Interviews, and AI Career Paths
AI Accelerator

- Break down roles: AI Engineer, Model Engineer, Researcher, PM, Architect
- Prepare for FAANG/LLM interviews with DSA, behavioral prep, and project portfolio
- Use ChatGPT and other tools for mock interviews and story crafting
- Learn how to build a standout AI resume, repo, and demo strategy
- Explore internal AI projects, indie hacker startup paths, and transition guides

RAG Hallucination Control & Enterprise Search
AI Accelerator

- Explore use of RAG in enterprise settings with citation engines
- Compare hallucination reduction strategies: constrained decoding, retrieval, DPO
- Evaluate model trustworthiness for sensitive applications
- Learn from production examples in legal, compliance, and finance contexts

LLM Production Chain (Inference, Deployment, CI/CD)
AI Accelerator

- Map the end-to-end LLM production chain: data, serving, latency, monitoring
- Explore multi-tenant LLM APIs, vector databases, caching, rate limiting
- Understand tradeoffs between hosting vs using APIs, and inference tuning
- Plan a scalable serving stack (e.g., LLM + vector DB + API + orchestrator)
- Learn about LLMOps roles, workflows, and production-level tooling

Positional Encoding + DeepSeek Internals
AI Accelerator

- Understand why self-attention requires positional encoding
- Compare encoding types: sinusoidal, RoPE, learned, binary, integer
- Study skip connections and layer norms: stability and convergence
- Learn from DeepSeek-V3 architecture: MLA (multi-head latent attention for KV compression), MoE (expert gating), MTP (multi-token prediction), FP8 training
- Explore when and why to use advanced transformer optimizations
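Self-attention on its own treats its input as an unordered set of tokens, so position has to be injected separately. As a rough illustration of the sinusoidal option in the list above, here is a minimal standard-library sketch of the classic formulation. The function name and the `base=10000.0` default are assumptions for this example, and RoPE or learned encodings work differently.

```python
import math


def sinusoidal_positional_encoding(seq_len, d_model, base=10000.0):
    """Return a seq_len x d_model table of sinusoidal position vectors.

    Even columns hold sin(pos / base**(2i / d_model)) and odd columns hold
    the matching cosine, for i = 0 .. d_model/2 - 1.
    """
    if d_model % 2 != 0:
        raise ValueError("d_model must be even")
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model // 2):
            angle = pos / (base ** (2 * i / d_model))
            row.append(math.sin(angle))  # column 2i
            row.append(math.cos(angle))  # column 2i + 1
        table.append(row)
    return table


if __name__ == "__main__":
    for row in sinusoidal_positional_encoding(seq_len=4, d_model=8):
        print([round(v, 3) for v in row])
```

Each row would typically be added to the token embedding at the same position before the first attention layer.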

Text-to-SQL and Text-to-Music Architectures
AI Accelerator

- Implement text-to-SQL using structured prompts and fine-tuned models
- Train and evaluate SQL generation accuracy using execution-based metrics
- Explore text-to-music pipelines: prompt → MIDI → audio generation
- Compare contrastive vs generative learning in multimodal alignment
- Study evaluation tradeoffs for logic-heavy vs creative outputs
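An execution-based metric scores a generated query by running it and comparing its result set with that of a reference query, rather than comparing SQL strings. The sketch below shows one way this could look with Python's built-in `sqlite3` module. The `users` table, the helper names, and the order-insensitive comparison are all assumptions for illustration; a real benchmark may treat `ORDER BY` queries differently.

```python
import sqlite3


def execution_match(conn, predicted_sql, gold_sql):
    """True if both queries return the same rows, ignoring row order."""
    try:
        pred_rows = conn.execute(predicted_sql).fetchall()
    except sqlite3.Error:
        # A query that fails to run counts as a miss.
        return False
    gold_rows = conn.execute(gold_sql).fetchall()
    return sorted(pred_rows, key=repr) == sorted(gold_rows, key=repr)


def execution_accuracy(conn, pairs):
    """Fraction of (predicted, gold) query pairs whose results match."""
    if not pairs:
        return 0.0
    return sum(execution_match(conn, p, g) for p, g in pairs) / len(pairs)


def build_demo_db():
    """Hypothetical in-memory database used only for this example."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER, name TEXT, age INTEGER)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?, ?)",
        [(1, "Ana", 31), (2, "Ben", 24), (3, "Cy", 45)],
    )
    return conn


if __name__ == "__main__":
    demo_pairs = [
        # Different SQL text, same result set: counts as correct.
        ("SELECT name FROM users WHERE age > 30",
         "SELECT name FROM users WHERE age >= 31"),
        # Misspelled column: fails to execute, counts as wrong.
        ("SELECT nme FROM users", "SELECT name FROM users"),
    ]
    print(execution_accuracy(build_demo_db(), demo_pairs))
```

Comparing results rather than text gives credit to queries that are written differently but are semantically equivalent on the test database.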

Building AI Code Agents: Case Studies from Copilot, Cursor, Windsurf
AI Accelerator

- Reverse engineer modern code agents like Copilot, Cursor, Windsurf, and Augment Code
- Compare transformer context windows vs RAG + AST-powered systems
- Learn how indexing, retrieval, caching, and incremental compilation create agentic coding experiences
- Explore architecture of knowledge graphs, graph-based embeddings, and execution-aware completions
- Design your own multi-agent AI IDE stack: chunking, AST parsing, RAG + LLM collaboration

Preference-Based Finetuning: DPO, PPO, RLHF & GRPO
AI Accelerator

- Learn why base LLMs are misaligned and how preference data corrects this
- Understand the difference between DPO, PPO, RLHF, and GRPO
- Generate math-focused DPO datasets using numeric correctness as preference signal
- Apply ensemble voting to simulate “majority correctness” and reduce hallucinations
- Evaluate model learning using preference alignment instead of reward models
- Compare training pipelines (DPO vs RLHF vs PPO) on cost, control, and complexity
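The two dataset items above (numeric correctness as the preference signal, with majority voting as a fallback when no ground truth is available) can be sketched roughly as follows. This is an illustrative assumption about how such a pipeline might look, not the course's implementation: the function names are hypothetical, and the `prompt` / `chosen` / `rejected` record layout is simply a common convention for preference data.

```python
from collections import Counter


def majority_answer(answers):
    """Most frequent numeric answer among the sampled completions."""
    return Counter(answers).most_common(1)[0][0]


def build_preference_pairs(prompt, completions, reference=None):
    """Pair every 'correct' completion with every 'incorrect' one.

    completions: list of (text, numeric_answer) tuples sampled for one prompt.
    reference:   known correct answer; if None, the majority vote is used.
    """
    answers = [answer for _, answer in completions]
    target = reference if reference is not None else majority_answer(answers)
    chosen = [text for text, answer in completions if answer == target]
    rejected = [text for text, answer in completions if answer != target]
    return [
        {"prompt": prompt, "chosen": c, "rejected": r}
        for c in chosen
        for r in rejected
    ]


if __name__ == "__main__":
    samples = [
        ("17 + 25 = 42", 42),
        ("17 + 25 = 32", 32),
        ("The sum is 42", 42),
    ]
    for pair in build_preference_pairs("What is 17 + 25?", samples):
        print(pair)
```

Majority voting is only a proxy for correctness: if most samples agree on a wrong answer, the resulting pairs will reinforce it, so a known reference answer is preferable when one exists.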